Geofeeds in Practice: What We’re Seeing (and Where They Still Fall Short)

At a December 2025 IAB workshop, I had the opportunity to share what we’re seeing in the real world as geofeed adoption accelerates.

At IPinfo, geofeeds are already part of how we collate, categorize, and verify location signals across the internet. They are useful. They are gaining traction. But based on our measurement and pipeline experience, they are not a silver bullet.

In this post, I want to walk through what we’re observing at scale: where geofeeds are working well, where they introduce ambiguity, and what the community can do to strengthen trust in this signal going forward.

A Quick Primer: What Geofeeds Are

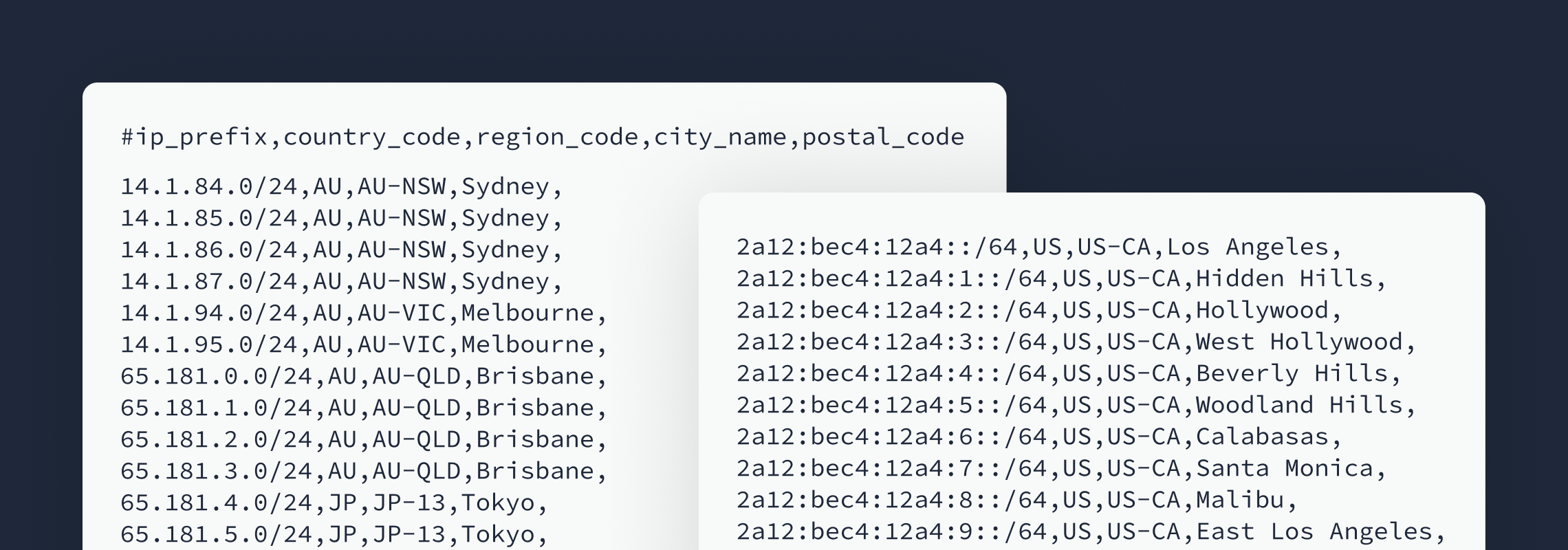

For readers less familiar, geofeeds are typically CSV files that map IP prefixes to geographic locations. The location may be expressed at different levels of granularity, including:

- Country

- Region

- City

- (Historically) postal code, which is now largely deprecated

From a data pipeline perspective, geofeeds provide a structured, operator-asserted signal about where infrastructure is believed to be located. When combined with active measurement and other evidence-based signals, they can be very valuable.
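As a rough illustration of how little structure a consumer has to work with, here is a minimal Python sketch of reading such a file. It assumes the column order prefix, country, region, city, postal code described in RFC 8805 and treats lines starting with `#` as comments. The names `GeofeedEntry` and `parse_geofeed` are invented for this example, and the sample rows use documentation prefixes rather than real data.

```python
import csv
import ipaddress
from dataclasses import dataclass
from typing import Iterable, Iterator, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


@dataclass(frozen=True)
class GeofeedEntry:
    """One geofeed row (hypothetical container for this example)."""
    prefix: Network
    country: str
    region: str
    city: str


def parse_geofeed(lines: Iterable[str]) -> Iterator[GeofeedEntry]:
    """Yield entries from geofeed CSV lines, skipping blanks and '#' comments."""
    data = (ln for ln in lines if ln.strip() and not ln.lstrip().startswith("#"))
    for row in csv.reader(data):
        # Everything after the prefix is optional, so pad short rows.
        fields = [f.strip() for f in row] + [""] * 4
        prefix = ipaddress.ip_network(fields[0], strict=False)
        yield GeofeedEntry(prefix, fields[1].upper(), fields[2].upper(), fields[3])


SAMPLE = """\
# example geofeed using documentation prefixes
192.0.2.0/24,US,US-CA,San Francisco,
2001:db8::/32,DE,DE-BE,Berlin,
198.51.100.0/24,NL,,,
"""

entries = list(parse_geofeed(SAMPLE.splitlines()))
for entry in entries:
    print(entry.prefix, entry.country, entry.region or "-", entry.city or "-")
```

This sketch raises `ValueError` on an unparseable prefix. A production consumer would more likely log and skip such rows.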

Find out more about what geofeeds are and how to set one up.

Adoption Is Growing

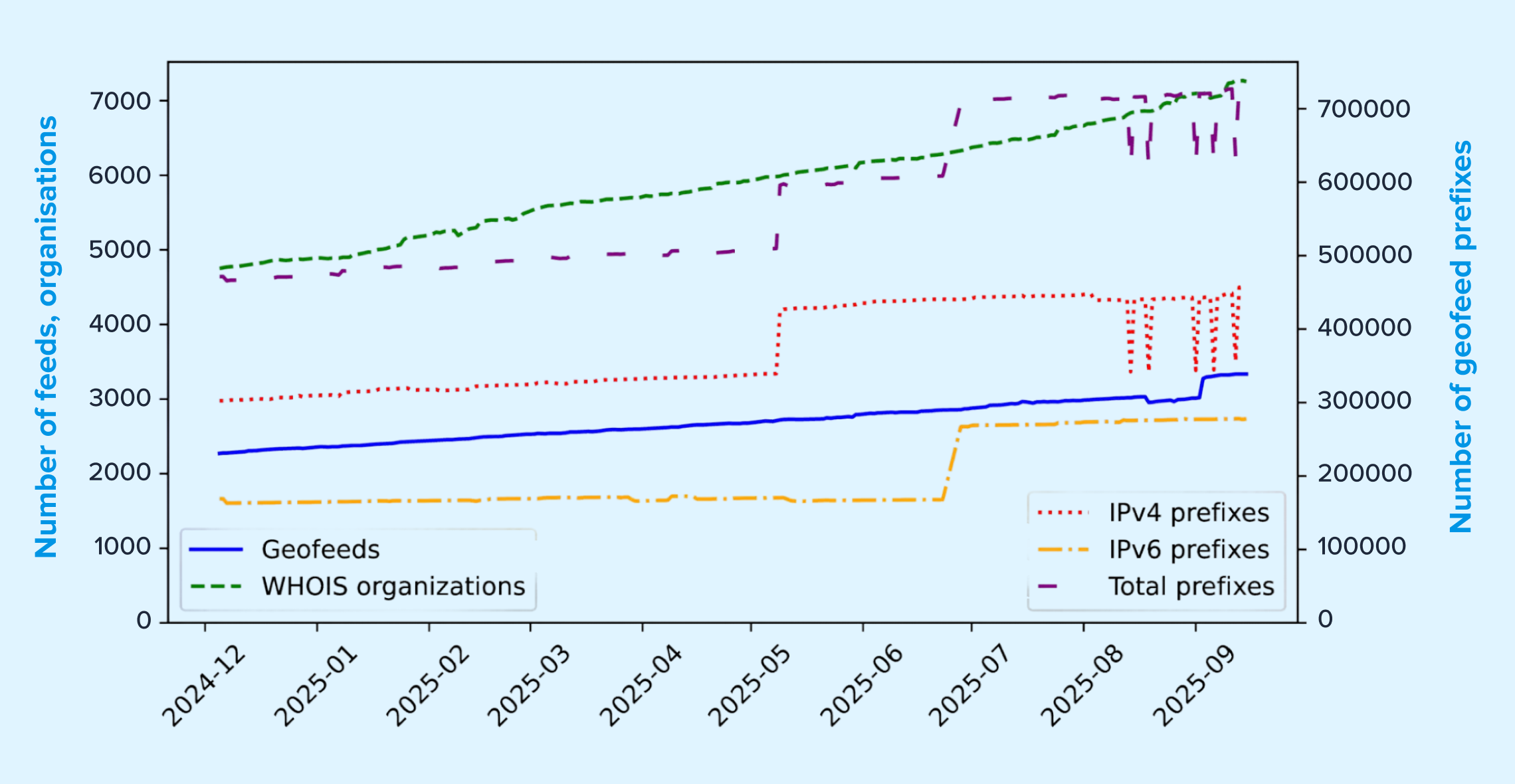

From our internal telemetry, geofeed usage is clearly increasing.

As of September 2025, we observed:

- 3,000+ geofeeds ingested into our pipeline

- 7,000+ AS organizations publishing geofeeds

- 450,000+ IPv4 prefixes referenced

- 320,000+ IPv6 prefixes referenced

The overall trajectory is upward.

However, the growth curve is not perfectly smooth. We see periodic jumps and anomalies that point to deeper quality and trust challenges, some of which I will unpack below.

Challenge #1: Ambiguous Locations, Typos, and Formatting Errors

One of the most immediate issues is basic data hygiene.

In the wild, we routinely observe:

- Full region names used instead of ISO codes

- Country and region fields swapped

- Invalid ISO region codes

- Non-standard country codes

- Prefix length typos (for example, /6 instead of /64)

- Character encoding problems

Individually, these may appear minor. At scale, they introduce real friction for consumers trying to operationalize geofeeds reliably.
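Several of these problems can be caught with simple shape checks before any geographic lookup happens. The sketch below is one possible linter, not a description of our pipeline. The function name `lint_entry`, the regular expressions, and the `MIN_IPV6_PREFIXLEN` threshold are all assumptions chosen for illustration, and the patterns check only the form of a code, not whether it is actually assigned in ISO 3166.

```python
import ipaddress
import re
from typing import List

COUNTRY_RE = re.compile(r"^[A-Z]{2}$")               # shape of an ISO 3166-1 alpha-2 code
REGION_RE = re.compile(r"^[A-Z]{2}-[A-Z0-9]{1,3}$")  # shape of an ISO 3166-2 code
MIN_IPV6_PREFIXLEN = 16  # assumed threshold: shorter IPv6 prefixes are likely typos


def lint_entry(line: str) -> List[str]:
    """Return a list of human-readable problems found in one geofeed line."""
    fields = [f.strip() for f in line.split(",")] + [""] * 3
    prefix_text, country, region = fields[0], fields[1], fields[2]
    problems = []

    try:
        net = ipaddress.ip_network(prefix_text, strict=False)
    except ValueError:
        net = None
        problems.append("unparseable prefix")
    if net is not None and net.version == 6 and net.prefixlen < MIN_IPV6_PREFIXLEN:
        problems.append("suspiciously short IPv6 prefix length")

    country_ok = bool(COUNTRY_RE.match(country))
    region_ok = bool(REGION_RE.match(region))
    if country and not country_ok:
        problems.append("country is not a two-letter uppercase code")
    if region and not region_ok:
        problems.append("region is not in ISO 3166-2 form")
    if country_ok and region_ok and not region.startswith(country + "-"):
        problems.append("region does not belong to country")
    if COUNTRY_RE.match(region) and REGION_RE.match(country):
        problems.append("country and region look swapped")
    return problems


print(lint_entry("2001:db8::/6,DE,DE-BE,Berlin"))
print(lint_entry("192.0.2.0/24,US,California,"))
print(lint_entry("192.0.2.0/24,US-CA,US,"))
```

Flagging rather than silently repairing keeps the decision about aggressive normalization in the consumer's hands.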

Why this matters

Geofeeds are often treated as authoritative operator signals. But when location fields are ambiguous or malformed, downstream systems must either:

- Normalize aggressively (and risk misinterpretation), or

- Ignore the signal entirely

Neither outcome is ideal for teams building production workflows.

A potential path forward

One promising improvement would be the inclusion of unambiguous location identifiers, such as:

- GeoNames IDs

- H3 indices

- Other canonical geographic references

These would reduce ambiguity and improve interoperability across datasets.

Challenge #2: Incentivized or Adversarial Location Claims

Not all geofeed inaccuracies are accidental.

Some network operators have incentives to publish optimistic or misleading location information, particularly in environments like VPN infrastructure, where marketing often emphasizes the number of available locations.

Read our recent research on VPN location mismatches.

We have even seen public guides explaining how to get IP space geolocated in places like Antarctica or North Korea.

A concrete example

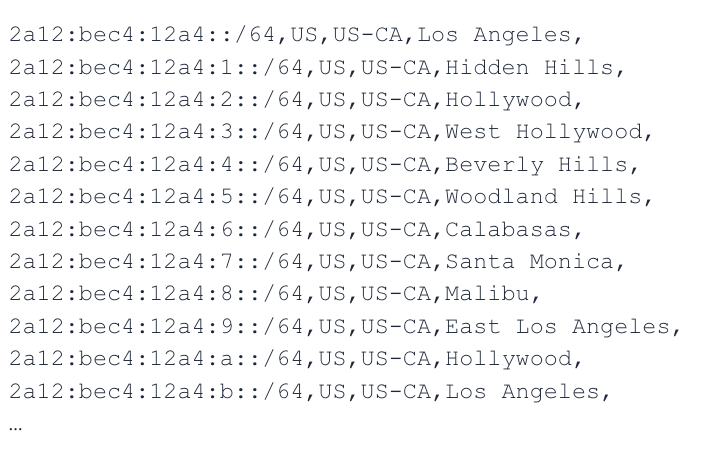

One of the largest anomalies we observed came from a single IPv6 network that published a geofeed covering:

- All 249 official country codes

- Extensive city listings per country

- ~100,000 entries for one prefix

This kind of behavior creates visible distortions in ecosystem-wide telemetry and reduces trust in operator-asserted data.
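An anomaly of this shape is straightforward to surface. As a hedged sketch, the Python below counts how many distinct countries a feed claims inside one allocated prefix and flags the allocation when the count exceeds a limit. The function names and the `MAX_COUNTRIES` value are assumptions for illustration; a sensible limit depends on the network, since large operators legitimately span many countries.

```python
import ipaddress
from typing import Iterable, Set, Tuple

MAX_COUNTRIES = 3  # assumed threshold, to be tuned per network size


def distinct_countries(allocation: str, rows: Iterable[Tuple[str, str]]) -> Set[str]:
    """Collect country codes claimed by entries that fall inside `allocation`."""
    parent = ipaddress.ip_network(allocation)
    seen = set()
    for prefix_text, country in rows:
        net = ipaddress.ip_network(prefix_text, strict=False)
        # subnet_of() raises TypeError across IP versions, so compare versions first.
        if net.version == parent.version and net.subnet_of(parent):
            seen.add(country.upper())
    return seen


def claims_too_many_countries(allocation: str, rows, limit: int = MAX_COUNTRIES) -> bool:
    """True when the allocation is mapped to more countries than `limit`."""
    return len(distinct_countries(allocation, rows)) > limit


rows = [
    ("2001:db8:0:1::/64", "US"),
    ("2001:db8:0:2::/64", "DE"),
    ("2001:db8:0:3::/64", "AQ"),
    ("2001:db8:0:4::/64", "KP"),
    ("192.0.2.0/24", "FR"),  # different address family, ignored for this allocation
]
print(sorted(distinct_countries("2001:db8::/32", rows)))
print(claims_too_many_countries("2001:db8::/32", rows))
```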

What could help

From our perspective, strengthening verification mechanisms would be valuable. For example:

- Looking glass–based validation

- Active measurement corroboration

- Community review workflows

At IPinfo, we already cross-check geofeed assertions against ProbeNet, our internet measurement platform, which gives us independent infrastructure visibility across more than 150 countries and hundreds of cities. That kind of measurement layer is increasingly important for distinguishing strong signals from questionable ones.
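One widely used form of measurement corroboration is a speed-of-light bound: a round-trip time from a probe at a known location caps how far away the target can be. The sketch below illustrates that general idea and is not a description of how ProbeNet works. It assumes signals travel at roughly two thirds of the speed of light in fibre (about 200 km per millisecond), and the coordinates are approximate city centres used only as sample input.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBRE_KM_PER_MS = 200.0  # assumption: roughly 2/3 of c, a common rule of thumb


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def max_distance_km(rtt_ms: float) -> float:
    """Upper bound on probe-to-target distance implied by a round-trip time."""
    return (rtt_ms / 2.0) * FIBRE_KM_PER_MS


def claim_is_plausible(probe, claimed, rtt_ms: float) -> bool:
    """False when the claimed location is farther away than the RTT allows."""
    return haversine_km(*probe, *claimed) <= max_distance_km(rtt_ms)


frankfurt = (50.11, 8.68)    # probe location (approximate)
amsterdam = (52.37, 4.90)    # claimed location A (approximate)
sydney = (-33.87, 151.21)    # claimed location B (approximate)

print(claim_is_plausible(frankfurt, amsterdam, rtt_ms=10.0))
print(claim_is_plausible(frankfurt, sydney, rtt_ms=5.0))
```

The check is one-sided. A failed bound rules a claim out, but a passed bound only means the claim is not contradicted by that probe.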

Challenge #3: False Precision and Granularity Inflation

Geofeed fields for region and city are optional, and for good reason. In practice, however, we frequently see prefixes assigned highly specific locations even when the underlying network architecture does not support that level of precision. A common pattern appears in:

- Large global networks

- LEO providers

- Mobile or highly dynamic infrastructure

In several cases, city fields consistently resolve to capital cities, which from a measurement standpoint is often implausible.
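A simple way to spot this pattern is to measure how often a feed's city field equals the country's capital. The following Python sketch does that under loud assumptions: `CAPITALS` is a tiny hand-written lookup for illustration only, `capital_share` is an invented name, and matching is a plain case-insensitive string comparison, which a real consumer would replace with proper place-name resolution.

```python
from typing import Iterable, Tuple

# Illustrative subset only; a real check needs a complete, canonical gazetteer.
CAPITALS = {"US": "Washington", "DE": "Berlin", "FR": "Paris"}


def capital_share(entries: Iterable[Tuple[str, str]]) -> float:
    """Fraction of city-level entries (in known countries) that name the capital."""
    total = 0
    hits = 0
    for country, city in entries:
        capital = CAPITALS.get(country.upper())
        if capital is None or not city.strip():
            continue  # unknown country or no city claimed: nothing to compare
        total += 1
        if city.strip().casefold() == capital.casefold():
            hits += 1
    return hits / total if total else 0.0


feed = [
    ("US", "Washington"),
    ("DE", "Berlin"),
    ("FR", "Paris"),
    ("FR", "Lyon"),
    ("NL", ""),  # country-level entry, skipped
]
print(capital_share(feed))
```

A high share is a prompt for closer inspection rather than proof of a problem, since some networks really are concentrated in capital cities.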

Why this creates risk

False granularity can be more damaging than coarse data. It gives downstream systems a false sense of confidence, which can affect:

- Fraud controls

- Compliance decisions

- Traffic routing logic

- Risk scoring workflows

What the community can do

Clearer guidance in the standard and operator education would help reinforce that:

- Region and city fields are optional

- They should only be populated when justified by the infrastructure

- Coarser but correct data is preferable to precise but misleading data

Check out my explanation on how IP geolocation data works.

Challenge #4: User Location vs. Infrastructure Location

This remains one of the most persistent sources of confusion. In many environments, including:

- LEO networks

- VPNs

- Mobile roaming

- Carrier-grade NAT

- Anycast deployments

…the user’s physical location and the IP infrastructure location can diverge significantly.

Today, geofeeds do not clearly signal which of these they represent.

Why this matters operationally

For consumers, the key question is: What exactly does this geolocation refer to?

Without that clarity, teams may apply the signal incorrectly in:

- Policy enforcement

- Regulatory workflows

- Fraud detection

- Content localization

A useful enhancement

An extension that explicitly distinguishes infrastructure location from user-affinity location (if claimed) would materially improve interpretability and downstream correctness.

Challenge #5: Stale Data and Missing Validity Windows

Another practical issue is the lack of an explicit validity period. While RFC 8805 points to the HTTP expiration model for refresh timing, in practice:

- Many consumers do not rely on it

- It is out-of-band

- It is difficult to operationalize at scale

From a pipeline perspective, we often cannot determine:

- How long a geofeed entry should be trusted

- When it was last meaningfully updated

A pragmatic improvement

Embedding metadata such as TTL, created timestamp, and updated timestamp would give consumers clearer guidance on data freshness and refetch strategy.
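To make the idea concrete, here is a hypothetical sketch of how a consumer might use such metadata if it were carried in comment lines. No such convention exists in the current format: the `# ttl:` and `# updated:` keys, their units, and the function names are all invented for this example.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable


def read_metadata(lines: Iterable[str]) -> Dict[str, str]:
    """Collect hypothetical 'key: value' pairs from '#' comment lines."""
    meta = {}
    for line in lines:
        if not line.startswith("#"):
            continue
        key, sep, value = line[1:].partition(":")
        if sep:  # ignore free-text comments that contain no colon
            meta[key.strip().lower()] = value.strip()
    return meta


def is_stale(meta: Dict[str, str], now: datetime) -> bool:
    """True when `now` is past the updated timestamp plus the TTL in seconds."""
    updated = datetime.fromisoformat(meta["updated"].replace("Z", "+00:00"))
    ttl = timedelta(seconds=int(meta["ttl"]))
    return now > updated + ttl


feed = [
    "# example geofeed with invented metadata keys",
    "# ttl: 86400",
    "# updated: 2025-09-01T00:00:00Z",
    "192.0.2.0/24,US,,,",
]
meta = read_metadata(feed)
print(meta)
print(is_stale(meta, datetime(2025, 9, 3, tzinfo=timezone.utc)))
```

Because the metadata travels inside the file, a consumer can apply the same freshness logic whether the feed was fetched over HTTPS, mirrored, or read from an archive.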

Challenge #6: No Clear Feedback Loop

When consumers detect issues in a geofeed today, remediation is often manual and inconsistent.

Common friction points include:

- WHOIS contacts that are not the geofeed maintainer

- No dedicated reporting channel

- Consumers forced to silently correct or ignore errors

Including dedicated contact metadata within geofeeds would make ecosystem feedback loops much healthier.

Challenge #7: Barriers to RFC-Conformant Deployment

Finally, we do observe geofeeds published outside the typical WHOIS discovery path. These are less likely to be RFC-conformant, often because:

- Tooling is limited

- Validation is manual

- Deployment cost is higher than expected

Lowering the operational burden, for example, through validation services or publishing tooling, could meaningfully improve ecosystem quality.

Where This Leaves Us

At IPinfo, we view geofeeds as a valuable and increasingly important signal. They help move the ecosystem toward more transparent, operator-asserted location data.

At the same time, our experience cross-validating geofeeds against ProbeNet measurements shows clear areas where the ecosystem can mature:

- Ambiguity and formatting hygiene

- Incentivized or adversarial claims

- Granularity discipline

- Clear semantics (user vs. infrastructure)

- Freshness signaling

- Feedback mechanisms

- Lower-friction deployment tooling

None of these challenges are insurmountable. But addressing them will require continued collaboration between operators, data providers, and standards bodies.

From our perspective, the goal is straightforward: Preserve what makes geofeeds powerful while strengthening the signals that make them trustworthy.

I am very interested in continuing this discussion with the community and exploring which of these proposals, or alternatives, are most practical to move forward.

About the author

As head of research at IPinfo, Oliver leads the company’s research team, collaborates with academic institutions, and conducts cutting-edge research.